In the days since the U.S. presidential election, the scourge of fake news has been tearing at the soul of Internet media. An interview with a satire news website editor, published by The Washington Post on November 17th, discussed how shares of fake news stories on social media platforms may have had a real effect on the recent election. Fake news websites have been a lucrative business, too. A separate Washington Post article published the day after that interview reports that fake news sites can earn thousands of dollars each month in advertising revenue through programs like Google’s AdSense.

In the days after the election, major tech companies have taken steps to ensure that the content on their platforms is factually accurate. Online search giant Google, a subsidiary of Alphabet (NASDAQ:GOOGL), announced that it would attempt to weed out fake news sites from its AdSense program in an effort to prevent those sites from making money. Mark Zuckerberg, the CEO of social media firm Facebook (NASDAQ:FB), wrote a public post in which he discussed projects undertaken by the company to detect false articles, verify facts and display warnings when fake news stories are shared. Facebook in particular has struggled with fake news sites and has even been accused in recent months of using an algorithm that promoted fake news stories through its Trending News section.

It’s into this uncertain atmosphere that Japanese tech conglomerate Sony Corporation (NYSE:SNE) hopes to step with a tangible solution to the problem. On Thursday, November 10th, the U.S. Patent and Trademark Office published a patent application filed by Sony for a system designed to verify the reputation of information sources available through digital channels using a multitude of approaches.

U.S. Patent Application No. 20160328453, titled Veracity Scale for Journalists, would protect a method, programmed into a device’s memory, for acquiring input from a user regarding an article or a journalist, collating and storing the input in a database, filtering the input to generate filtered data, applying a user-specific filter to that data to generate veracity information associated with the article or journalist, and then displaying the veracity information. The veracity system would take into account multiple parameters associated with an information source’s reputation, including historical accuracy, current accuracy, understandability, writing style and bias.
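To make the claimed sequence of steps more concrete, here is a minimal Python sketch of how such a pipeline might be arranged. It is an illustration only and is not drawn from Sony's application: the names `Rating`, `collate`, `filter_input` and `veracity`, the 0-to-1 score scale, the validity check and the weighted-average formula are all assumptions. Only the five parameter names come from the description above.

```python
from dataclasses import dataclass
from statistics import mean

# Parameter names follow the article's description of the application.
PARAMETERS = (
    "historical_accuracy",
    "current_accuracy",
    "understandability",
    "writing_style",
    "bias",
)


@dataclass
class Rating:
    """One user's input about an article or journalist (hypothetical shape)."""
    source: str   # the article or journalist being rated
    rater: str    # the user supplying the input
    scores: dict  # parameter name -> score, assumed to lie in [0, 1]


def collate(ratings):
    """Group ratings by source; a dict stands in for the database step."""
    db = {}
    for r in ratings:
        db.setdefault(r.source, []).append(r)
    return db


def filter_input(ratings):
    """General filter (assumed rule): keep only complete, in-range ratings."""
    return [
        r for r in ratings
        if all(p in r.scores and 0.0 <= r.scores[p] <= 1.0 for p in PARAMETERS)
    ]


def veracity(ratings, user_weights):
    """User-specific filter (assumed rule): weight each parameter's mean
    score by how much this particular reader cares about it."""
    total = sum(user_weights.values())
    if not ratings or total == 0:
        return None
    weighted = sum(
        w * mean(r.scores[p] for r in ratings) for p, w in user_weights.items()
    )
    return weighted / total
```

A reader who cares only about accuracy could pass weights of 1 for the two accuracy parameters and 0 for the rest; the displayed veracity figure would then ignore writing style entirely.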

The background section of this patent application notes that people have in the past sought out platforms designed not simply to share information but also to provide an objective view of the reputation of a source of information. Angie’s List, for example, is a reputation-based classified advertising-style service in which reviews of various professionals are crowd-sourced in order to determine whether a home improvement worker is worth the quote he or she gives for work. Even Facebook, one of the tech companies at the center of the fake news conflict, enables some form of content review with its system of “likes.”

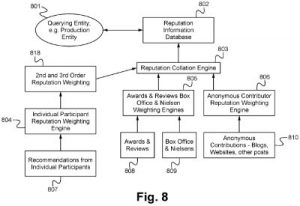

According to Innography’s patent portfolio analysis tools, Sony owns 13 active U.S. intellectual property assets related to reputation, comprising nine patent grants and four patent applications, among them the ‘453 patent application. 2016 has seen the publication of three such Sony patent applications related to reputation ranking. One of these is U.S. Patent Application No. 20160189084, titled System and Methods for Determining the Value of Participants in an Ecosystem to One Another and To Others Based on Their Reputation and Performance. This application claims a similar method, programmed into a device’s memory, which involves acquiring reputation information of a ratee from a rater on an online system, analyzing the reputation information using a reputation collation engine and generating a reputation index of the ratee based on that analysis. The input in this system is weighted based on the credibility of the rater or the proximity of the rater to the ratee.
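The weighting idea described above can be sketched in a few lines of Python. This is a hedged illustration rather than the method in the application: the `RaterInput` type, the 0-to-1 scales and the credibility-weighted average are assumptions, and a proximity value could be substituted for (or combined with) credibility as the weight.

```python
from typing import List, NamedTuple, Optional


class RaterInput(NamedTuple):
    """One rater's view of a ratee (hypothetical structure)."""
    score: float        # rater's assessment of the ratee, assumed 0..1
    credibility: float  # how much the rater is trusted, assumed 0..1


def reputation_index(inputs: List[RaterInput]) -> Optional[float]:
    """Credibility-weighted average of scores; None if no trusted input."""
    total_weight = sum(i.credibility for i in inputs)
    if total_weight == 0:
        return None
    return sum(i.score * i.credibility for i in inputs) / total_weight
```

Under this scheme a glowing score from a rater with zero credibility has no effect on the index, which is the practical point of weighting by the rater's own reputation.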

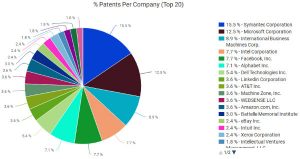

A survey of the U.S. patent landscape, again using Innography, shows that there have been 271 U.S. patents related to reputation issued through most of 2016. The pie chart shows that the top companies in this field are tech companies which invest heavily in research and development, led by Symantec Corporation (NASDAQ:SYMC) of Mountain View, CA, which has earned 26 U.S. patents this year related to reputation. Following directly after Symantec are Microsoft Corporation (NASDAQ:MSFT) of Redmond, WA and IBM (NYSE:IBM) of Armonk, NY. Tied for fourth place with 13 patents each are Facebook and Intel Corporation (NASDAQ:INTC) of Santa Clara, CA. As the text cluster posted here shows, there are sizable subsets of this patent sector which are focused on social media and social networks.

There are plenty of issues which make it difficult to verify the accuracy of online news sources that go viral on social media. Facebook is a poster child for many of these. This May, we reported on Facebook’s poor management of its human Trending News curators, which led to extremely poor workplace conditions where anti-conservative bias was allowed to seep in, prompting conservative groups to accuse Facebook of de facto censorship. Facebook decided to address the issue of human bias by replacing those employees with a Trending News algorithm meant to promote news articles, but by late summer media reports were pointing out a rise in fake news stories being promoted by Facebook. One problem is the current state of development in the artificial intelligence sector, where natural language processing technologies are able to solve simple question-and-answer scenarios but lack the ability to understand satire.

Another issue which could make this problem even thornier is perhaps endemic to the entire world of social media. Consider the game of “Telephone” in which people sit in a circle and one person whispers a phrase into a second person’s ear, and the second person then whispers it to a third, and so on until the circle is complete and the original message returns to the person who started it. More often than not, that phrase has completely changed because, somewhere along the way, someone misinterpreted it. Now consider the recent story of a communications professor from Merrimack College who compiled a list of fake news sites. When it hit social media, that list went viral out of an honest, well-meaning intention to inform others of misleading news sites. That professor ended up taking the list down from her own social media pages over concerns that the list itself could be misleading because it contains different categories of sites (e.g., satire, factual but highly subjective) which the professor thought people were misinterpreting. Social media continues to pave the road with good intentions, and yet we continue ending up in an unsavory destination.
