“Not all declared patents are essential and not all essential patents are declared. Both described scenarios show that patent declaration data needs refinement, filtering, extrapolation and a neutral and objective SEP determination and valuation metric.”

One of the major challenges when licensing, transacting, or managing Standard Essential Patents (SEPs) is that there is no public database that provides information about verified SEPs. Standard-setting organizations (SSOs) such as ETSI (4G/5G), IEEE (Wi-Fi), or ITU-T (HEVC/VVC) maintain databases of so-called self-declared patents to document the fair, reasonable and non-discriminatory (FRAND) obligation. However, SSOs do not determine whether any of the declared patents are essential, nor are declarants required to provide any proof or updates. As a result, in licensing negotiations, patent acquisitions, or litigation, the question of which patents are essential is one of the most debated when negotiating SEP portfolio value, royalties, or infringement claims. Artificial Intelligence (AI) solutions have started to support the process of understanding how patent claims relate to standards, making it possible to assess larger SEP portfolios without spending weeks or months and significant sums on manual reviews by technical subject matter experts and counsel.

Limitations of SEP Declaration Data

As Justice Birss concluded in Unwired Planet vs. Huawei, “…in assessing a FRAND rate counting patents is inevitable”. However, SEP declaration counting is typically subject to two limitations:

- Maximal declaration situation

SSOs such as ETSI (the organization that specifies 4G/5G standards) encourage standards developers to declare any patent that could potentially be essential for standards. A few declaring companies prepare claim charts before declaring patents; most others declare any potentially essential patent without in-depth analysis. Companies also often submit patent declarations while patents are still pending and the standard is still evolving. Thus, patent claims as well as standards specifications are likely to change after the initial declaration. By design of the declaration practice, some of these declared patents end up not being essential. Publicly self-declaring all potentially essential patents for a given standard is an important part of the FRAND obligation and should not be called “over declaration”. Still, such patent declarations must not be confused with verified SEPs, as a good share of the declared patents are not essential.

- Minimal declaration situation

Other SSOs such as IEEE (the organization that specifies Wi-Fi) and ITU (the organization that specifies HEVC/VVC) allow patent owners to submit so-called blanket declarations, where declaring companies need not list specific patent numbers but only submit a blanket statement without any further details about potentially essential patents. By design of the blanket declaration practice, these databases provide no information about the magnitude of SEP ownership across companies. In other words, there is no transparency about whether a declaring company owns, for example, just a single SEP or several thousand SEPs.

To summarize: There are two big problems. Not all declared patents are essential and not all essential patents are declared. Both described scenarios show that patent declaration data needs refinement, filtering, extrapolation and a neutral and objective SEP determination and valuation metric. In the past, SEP essentiality determination was solely conducted by subject matter experts (SMEs) who mapped and charted patent claims and standards sections. However, there is no practical way for humans to determine patent essentiality for large populations of declared patents. Not only are there too many patents (IPlytics counts over 300,000 declared patents worldwide), but it is also rare for two different experts to agree on each other’s approach to mapping patents to standardized technologies, and any claim chart is biased towards the company commissioning the claim charting work.

Limitations of Human SEP Determination

The TCL v. Ericsson case is a good example of the limitations of employing human experts to count and valuate SEPs and to determine an overall essentiality rate. In this litigation, Ericsson and TCL argued about the quality and essentiality rate of the Ericsson SEP portfolio compared to the overall number of 2G, 3G and 4G SEPs. TCL commissioned subject matter experts to study a random sample of 2,600 ETSI-declared 2G, 3G and 4G patents to determine the essentiality rate. The assessment procedure drew several criticisms. It was calculated that the commissioned experts must have spent on average only about 20 minutes per patent and charged on average $100 per patent for their assessment. The time spent and the amount paid for SEP determination in this case differed greatly from the fees charged to verify SEPs in other settings, e.g. when determining patent pool licenses. Most experts would thus agree that it is reasonable to question whether a human can map a patent against complex technical specifications that may have up to 600 pages and hundreds of sections in just 20 minutes. Another, even more prevalent criticism was the bias of the experts who conducted the patent mapping. The experts retained by TCL knew which side they were on. This case shows that human SEP determination is subject to two main drawbacks:

- The budget and time needed to thoroughly map and chart tens of thousands of declared SEPs to complex standards such as 2G-5G, Wi-Fi or HEVC/VVC often make the exercise economically infeasible.

- Human experts are biased towards the party that sponsors the analysis.

The latest technical advances in AI-based algorithms allow machines to assist the work of subject matter experts by extrapolating given claim charts to larger samples of data. While AI-based SEP determination may not be as accurate as an expert spending hours or days over every patent, it has the advantage of repeatability, scalability and objectivity. A sophisticated AI algorithm can determine essentiality in milliseconds, whereas an expert will require days or sometimes weeks and months to come to the same decision.

The Complexity of SEP Data

One reason why human SEP determination is both costly and time consuming is the complexity of the standardized technology. Standards such as 5G consist of over a thousand so-called technical specifications (TS). These TS may have up to 600 pages and hundreds of so-called sections. To identify if a declared patent relates to a standard, experts must study and understand all patent claims and map identified claim elements against all possible standards sections. Even more, one patent may be declared to several standards documents that also must be considered when mapping the patent claims. The following data example well illustrates the complexity of data:

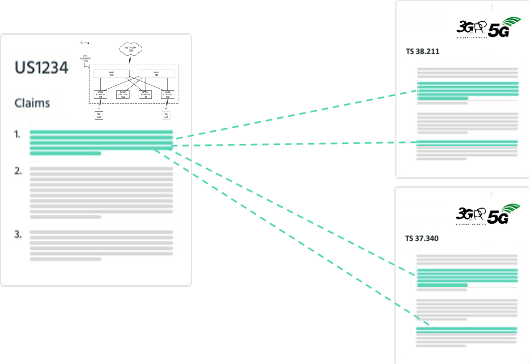

Figure 1: SEP declaration to multiple standards (ETSI SEP database example):

Figure 2: Combination of declared SEPs and standards (ETSI SEP database example):

Source: IPlytics

Figures 1 and 2 illustrate that patent declarations submitted to multiple standards documents create almost two million combinations when considering only the ETSI declaration data. As each ETSI standards specification has on average 212 so-called standard sections per document, and each declared patent has on average 20 claims, the number of claim-section combinations, 1,778,400 x 212 x 20, exceeds 7.5 billion. Such amounts of data are impossible for humans to analyze, and it is not economically feasible to put them in the hands of expert teams that would have to work for months or even years to determine the essentiality of the patents. This data example shows that even if policy makers suggest involving patent offices in the claim charting exercise, there will never be enough budget and human capacity to chart all declared patents worldwide, especially since the number of patent declarations is increasing sharply, by tens of thousands of newly declared patent families each year.
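The arithmetic behind the 7.5 billion figure can be checked in a few lines. The three input numbers are the ones quoted above; the review speed of one comparison per second is a purely hypothetical assumption, added only to give a sense of scale.

```python
# Figures quoted in the text (ETSI declaration data as reported by IPlytics).
patent_standard_pairs = 1_778_400  # declared patent x standards document combinations
avg_sections_per_spec = 212        # average sections per ETSI specification
avg_claims_per_patent = 20         # average claims per declared patent

claim_section_pairs = (
    patent_standard_pairs * avg_sections_per_spec * avg_claims_per_patent
)
print(f"{claim_section_pairs:,}")  # 7,540,416,000

# Hypothetical assumption for scale only: one claim-section comparison
# per second, around the clock, with no breaks.
SECONDS_PER_YEAR = 365 * 24 * 60 * 60
years_at_one_per_second = claim_section_pairs / SECONDS_PER_YEAR
print(round(years_at_one_per_second))  # 239
```

Even under that unrealistically fast assumption, a single reviewer would need more than two centuries to touch every claim-section pair once.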

Computer-Based Patent Essentiality Scoring

In computer science, an inverted index is a database index that stores a mapping from content, such as words, to the documents in which that content appears. The purpose of an inverted index is to allow fast full-text searches and text comparison, at the cost of increased processing when a document is added to the database. The inverted index is the most popular data structure in large-scale document retrieval systems, for example Internet search engines. Indexing, searching and comparing even billions of data points, such as patent claim and standard section data, can be conducted in milliseconds when the index is deployed on highly scalable cloud computers. State-of-the-art semantic algorithms represent documents as vectors in term spaces, which allows comparing the actual content of a patent claim and a standard section rather than the mere overlap of keywords (figure 3).
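As a minimal sketch of these two ideas, the following builds an inverted index over a few standards sections, uses it to retrieve candidate sections for a claim, and ranks the candidates by cosine similarity of TF-IDF term vectors. The section identifiers, the texts and all function names are hypothetical, and plain TF-IDF is only a keyword-weighted stand-in for the trained semantic models described in this article, not the method any particular vendor uses.

```python
import math
import re
from collections import Counter, defaultdict


def tokenize(text):
    """Lowercase the text and split it into alphanumeric terms."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_inverted_index(docs):
    """Map each term to the set of document ids that contain it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for term in tokenize(text):
            index[term].add(doc_id)
    return index


def tfidf_vector(text, index, n_docs):
    """Represent a text as a sparse {term: weight} vector."""
    vec = {}
    for term, tf in Counter(tokenize(text)).items():
        df = len(index.get(term, ()))  # number of sections containing the term
        vec[term] = tf * (math.log((1 + n_docs) / (1 + df)) + 1.0)  # smoothed IDF
    return vec


def cosine(a, b):
    """Cosine similarity between two sparse vectors."""
    dot = sum(w * b.get(t, 0.0) for t, w in a.items())
    norm = math.sqrt(sum(w * w for w in a.values())) * math.sqrt(
        sum(w * w for w in b.values())
    )
    return dot / norm if norm else 0.0


# Hypothetical standards sections and claim text, for illustration only.
sections = {
    "TS-A 5.1": "the user equipment transmits a random access preamble to the base station",
    "TS-A 7.3": "the base station schedules a downlink transmission for the user equipment",
    "TS-B 4.2": "video frames are partitioned into coding tree units",
}
claim = "a method wherein a user equipment sends a random access preamble"

index = build_inverted_index(sections)

# The index narrows the search to sections sharing at least one term with the claim.
candidates = set().union(*(index.get(t, set()) for t in tokenize(claim)))

claim_vec = tfidf_vector(claim, index, len(sections))
scores = {
    sid: cosine(claim_vec, tfidf_vector(sections[sid], index, len(sections)))
    for sid in candidates
}
best = max(scores, key=scores.get)
print(best, round(scores[best], 3))
```

The index lookup is what keeps the comparison cheap: only sections that share vocabulary with the claim are scored at all, so the video coding section is never touched in this toy example.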

Claim language and language in standard specifications are often very different: patent claims are drafted by patent attorneys using broad terminology so that the claims apply to as many applications as possible. Standard specifications are written by the engineers who develop the standard and use very specific language. To overcome this, semantic models are trained with human-created claim chart samples to understand the context of claims and standards, so that the algorithms learn to recognize different expressions for the same concepts in patent claim elements. In machine learning, semantic analysis of a corpus is the task of building structures that approximate concepts from a large set of documents, with the model trained on a smaller set of training data.

Figure 3: Semantic claim sections analysis

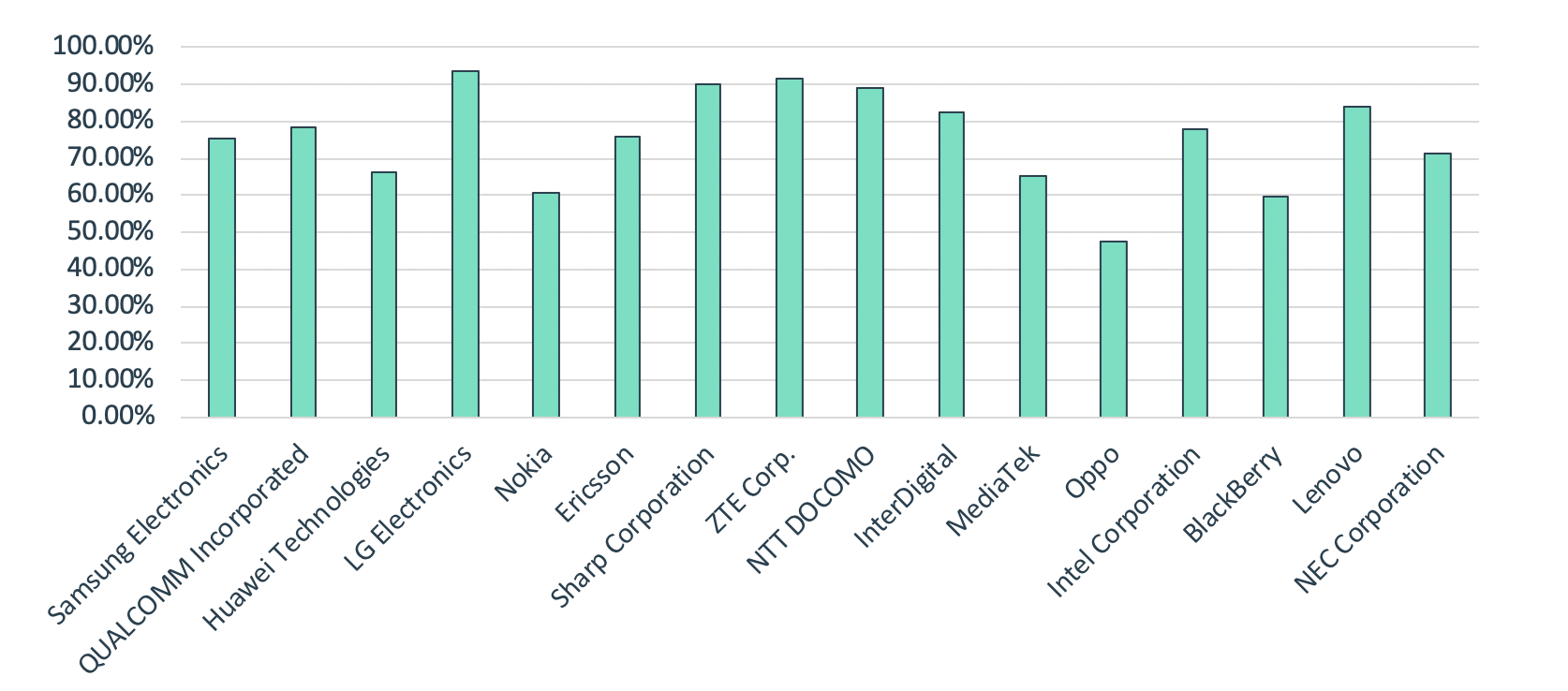

In addition to the semantic comparison of patent claims and standards sections, computer-based algorithms can extend the patent and standard data correlation by mapping the patent’s listed inventors (first name, last name, affiliation) to participation at the corresponding standards meetings, or by mapping the accepted standards contributions of the patent’s applicant/assignee that relate to the declared standard. A peer-reviewed article written by economists provides evidence that the patent intensity (of later declared patents) in related pre-standards meeting periods is 2.6 times higher than in the idle period between meetings. The researchers find that this effect is highest for participating firms when the inventor was present at the meeting. Figure 4 provides further evidence of the cross-correlation of patent inventors and standards meeting participation. In figure 4, the IPlytics Platform was used to cross-correlate inventor participation at 3GPP (3rd Generation Partnership Project) meetings for 5G declared patent portfolios. The analysis shows that for, on average, 72% of all 5G declared patents, the inventor (first name, last name, entity) participated at the relevant 5G 3GPP standards meeting where the declared TS was discussed.

Figure 4: Top SEP declaring companies by share of declared patents for which at least one listed inventor attended the relevant working group
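The inventor cross-correlation can be sketched as a simple lookup: for each declared patent, check whether any listed inventor appears in the attendance records of meetings that discussed the declared specification. The record layout, the names and the exact-match rule below are assumptions made for illustration; real data would need more robust name disambiguation than lowercasing and whitespace normalization.

```python
def normalize(name):
    """Lowercase and collapse whitespace so trivially different spellings match."""
    return " ".join(name.lower().split())


def participation_share(declared_patents, attendance):
    """Share of patents with at least one listed inventor recorded at a
    meeting that discussed the declared technical specification (TS)."""
    if not declared_patents:
        return 0.0
    hits = 0
    for patent in declared_patents:
        attendees = attendance.get(patent["declared_ts"], set())
        affiliation = normalize(patent["assignee"])
        if any((normalize(inv), affiliation) in attendees for inv in patent["inventors"]):
            hits += 1
    return hits / len(declared_patents)


# Hypothetical attendance records: TS id -> {(person, affiliation)}.
attendance = {
    "TS-X": {("jane doe", "example corp"), ("li wei", "sample networks")},
    "TS-Y": {("john roe", "example corp")},
}

# Hypothetical declared patents.
declared_patents = [
    {"id": "P1", "assignee": "Example Corp", "declared_ts": "TS-X",
     "inventors": ["Jane Doe", "Max Muster"]},
    {"id": "P2", "assignee": "Example Corp", "declared_ts": "TS-Y",
     "inventors": ["Jane  Doe"]},
    {"id": "P3", "assignee": "Sample Networks", "declared_ts": "TS-X",
     "inventors": ["Li Wei"]},
]

share = participation_share(declared_patents, attendance)
print(f"{share:.0%}")
```

In this toy data, two of the three patents have an inventor at a meeting on the declared specification; P2 does not, because its inventor attended a meeting on a different specification.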

AI-Based SEP Determination to Support Decision Making

AI-based semantic claim-section comparisons, the cross-correlation of inventor participation, and the submission of accepted technical contributions at standards meetings are strong indicators that a patent is relevant to a given standard. They can be integrated as features in AI-based SEP prediction models that score patents as to their likelihood of being standard essential. Making use of verified SEP training data from expert claim charts allows extrapolating information about essentiality to a much larger set of patents. This makes it possible to valuate large patent portfolios, and to determine their essentiality, where manual mapping by experts is not economically feasible. Furthermore, AI-based SEP prediction models allow estimating the likelihood of essentiality for patents that have not even been declared because they are covered by blanket declaration statements. While AI-based SEP determination may not replace the work of experts, it supports valuating and determining the essentiality of SEPs for various use cases:

- Patent portfolio managers use AI-based SEP determination to valuate their own portfolio in comparison to competitor portfolios with regard to essential assets.

- Patent licensing managers use AI-based SEP determination to understand the value and relevance of a licensed patent portfolio with regard to standards.

- Patent transaction managers use AI-based SEP determination to identify and valuate SEP portfolios for patent acquisition purposes, in order to understand what can likely be commercialized and what cannot.

- Economists use AI-based SEP determination to valuate a potential SEP portfolio share in the course of a top-down analysis, in order to calculate the numerator and denominator.
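How such features might be combined into an essentiality score, and how the scores feed a top-down share, can be sketched as follows. This is a minimal illustration assuming a logistic model; the feature names, weights and bias are invented for the example and are not taken from any real product. In practice the weights would be fitted on expert-verified claim charts.

```python
import math

# Hypothetical weights and bias; real values would be learned from
# expert-verified claim chart training data.
WEIGHTS = {
    "semantic_similarity": 4.0,     # claim-section similarity in [0, 1]
    "inventor_attended": 1.5,       # 1 if a listed inventor attended the meeting
    "accepted_contributions": 0.3,  # count of accepted contributions (capped upstream)
}
BIAS = -3.0


def essentiality_score(features):
    """Logistic score in (0, 1): estimated likelihood that a patent is essential."""
    z = BIAS + sum(w * float(features.get(name, 0.0)) for name, w in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))


def expected_sep_share(portfolios, owner):
    """Top-down share: the owner's expected SEP count (numerator) divided by
    the expected SEP count across all portfolios (denominator)."""
    expected = {
        name: sum(essentiality_score(f) for f in patents)
        for name, patents in portfolios.items()
    }
    total = sum(expected.values())
    return expected[owner] / total if total else 0.0


# Hypothetical portfolios, each patent described by its feature values.
portfolios = {
    "Company A": [
        {"semantic_similarity": 0.9, "inventor_attended": 1, "accepted_contributions": 2},
        {"semantic_similarity": 0.2, "inventor_attended": 0, "accepted_contributions": 0},
    ],
    "Company B": [
        {"semantic_similarity": 0.6, "inventor_attended": 1, "accepted_contributions": 0},
    ],
}

share_a = expected_sep_share(portfolios, "Company A")
share_b = expected_sep_share(portfolios, "Company B")
print(f"Company A: {share_a:.1%}, Company B: {share_b:.1%}")
```

Summing probabilities rather than counting declarations means a large portfolio of weakly related patents contributes less to the numerator than a small portfolio of closely mapped ones.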

Join the Discussion

15 comments so far.

Tim Pohlmann

July 5, 2021 07:46 am

#14 Thanks for your kind words and I agree with everything you write. Indeed, there are still many out there who clearly have not been part of the SEP discussion in the past 10 years but seem to have an opinion on this now. I guess we will need to keep educating them. SEPs will become relevant outside of the smartphone world and thus other industries with as yet little knowledge will have to adapt and understand how to deal with SEP essentiality determination, same as with SEP portfolio management or validity related searches. Our webinars are contributing to this continuous education: https://www.iplytics.com/de/webinars/upcoming/ and the IPlytics Platform database is contributing to increased transparency in the market.

Concerned

July 2, 2021 04:27 pm

@Tim Pohlmann #12 you are very kind and professional to provide thoughtful comments in reply to the other posts – not sure some of the comments deserve a reply though as the author makes it obvious they never participated in a standards meeting.

Your product has moved the state of the art forward and you should be commended for this. No doubt patent examiners (and many others) would be great beneficiaries of your company’s AI tools during patent examination to assist with prior art searching as patents are sometimes granted with priority dates after a published standard.

Anon

June 23, 2021 11:46 am

“It is in my view not the standard setting organizations responsibility to determine essentiality of self-declared patents by its members”

We will have to agree to disagree on this fundamental point.

Without agreement on this point, we will not see eye to eye on this topic.

Tim Pohlmann

June 22, 2021 07:06 am

@Anon #3

Thanks for your comments and kind words.

I do know for a fact that SEP owners very strategically align the process of their invention disclosures coming from the internal standards developing department with the internal patent board and patent prosecution team to make sure to file valid and essential patents right before contributions are submitted at the standards body. However, in standards development submitted contributions by member companies are approved and accepted because of the technical merit and here it does not matter if these are subject to patents or not. Typically, in a consensus decision making process with other market players that are often competitors. Again I also know that any possible invention in course of standard development is filed as a provisional application making sure if the proposed technology becomes part of the final standard it becomes a valid and essential patent. Read my latest article for further clarification: “Standards Contributions as Means to File Essential Patents”: https://ipwatchdog.com/2021/05/15/unpacking-5g-seps-standards-contribution-data/id=133530/

Also, I do not think there is a dereliction in duty. It is in my view not the standard setting organizations responsibility to determine essentiality of self-declared patents by its members. In general, I believe it should be not one authority to decide and determine standard essentiality of patents, but the market should self-regulate. Any patent owner who seeks royalties needs to provide evidence that the to be licensed SEP portfolio really is standard essential.

I also do not believe AI solutions will replace subject matter experts. But AI solution will and already are today supporting SEP determination as we speak as a human cannot map though a portfolio of thousands of patents. Again, join the webinar and you will see from high level speakers how much AI is already used.

@George #11

It is indeed difficult to estimate the value of technologies such as e.g. 5G today where yet the full potential of a connected world that runs on 5G cannot be foreseen. Typically, in patent licensing the licensor and licensee agree on a royalty per sold unit. Let’s say $10 per sold multi-mode smart phone. The more smart phones sold the higher the royalty. If $10 represents the real value of e.g. 5G in a smart phone is a different question.

And yes there are many economist that are experts in estimating the value of what a patented technology brings to the final product. This is a case-by-case analysis. And if someone uses a patented technology without paying royalties, patent owners enforce their patents and there are litigation cases, injunctive relief, triple damage payments and so on.

Anon

June 20, 2021 09:06 am

George @ 4, 5, 7, 8, and 9:

At least you kept your inane babble succinct.

Inane it remains, but you spare everyone the “wall of text” effect.

Small steps should be encouraged. 😉

George

June 18, 2021 09:20 pm

@Tim Pohlmann

Just wondering from an economics & law point of view, how can patented technologies that have not yet been commercialized (or fully deployed), have their ‘future value’ at least estimated in a way that would be acceptable to courts? How can their value 10 years out be argued in a court of law and has this been successfully done? Are there economists that specialize in doing that, especially for litigation purposes? In other words, what if some company steals a ‘potentially’ super-valuable technology that is either patented (in some form) or held as a trade secret? How is the ‘future’ value of something like that determined, or does litigation have to await clear proof of specific monetary harm? Can the statute of limitations be tolled in such cases (until proof of specific harm is obtained)? What are the best texts on the subject of ‘new technology’ valuation and future valuation?

George

June 18, 2021 09:04 pm

@Anon

If you can’t beat’em, join’em . . . or else!

George

June 18, 2021 08:59 pm

@Anon

‘Damn those infernal computers!!! – THEY’LL RUIN EVERYTHING FOR US!’ We’ll have to go out and find real work again!

George

June 18, 2021 08:51 pm

@Anon #5

“As I stated, the problem is NOT a lack of technology for the task. The problem is a lack of attention to the task.”

Translation: Blah, blah, blah!!!

George

June 18, 2021 08:49 pm

Excellent points. The practice of patent law will have to be largely replaced by computers & AI in the near future. Societies need to be able to issue ‘valid’ patent rights to their citizens, at a cost that is affordable to all of them, regardless of class or income and those patents should be robust enough to allow speedy adjudication as well (to also be performed mostly by computers).

It’s impossible today to be a ‘human’ examiner who is an expert in 100’s of fields and sub-fields. That may have been possible 150 years ago, but not anymore. Also, executing complex logic and producing ‘consistent and accurate analysis’ is far too difficult, time consuming and frankly ‘monotonous’ for humans now. People just can’t do that anymore – there’s way too much knowledge, prior art, cases & decisions needing careful review and comparison.

Only computers can ‘breeze through’ tons of data in minutes (instead of years). In fact, in theory they could consider everything known to man in the time it would take a ‘human expert’ to thoroughly analyze just one patent.

That’s why my firm is ‘all in’ on AI for making patent law accessible to anyone, for a price they can afford. Inventors don’t have time for all the expensive ‘linguistic and legal games’ that are now engaged in for a (lucrative) living, by those wanting to prevent enforcement of even obviously valid patent rights. Even the USPTO strenuously tries to not issue broad patents anymore and that is a very bad thing that just clogs up the courts.

If the PTO issued far fewer but much broader patents, then things would be much better, easier, more efficient and the wealth created by innovation would certainly be much more equitably distributed. Today all the wealth created by innovation goes right back up to the top 1%. That has to change and computers can definitely help change it. Go AI!!!

Anon

June 18, 2021 04:57 pm

George,

Please read my posts more carefully.

Whether or not “it’s coming” is quite different from EITHER “it’s here” or, in the immediate case of this post, whether it is what is pertinent.

As I stated, the problem is NOT a lack of technology for the task. The problem is a lack of attention to the task.

George

June 18, 2021 04:18 pm

@Anon

“Again with the AI !!!!” LOL!

Yup – it’s coming – whether you like it or not!

Anon

June 18, 2021 01:49 pm

“The reason for that is that some of the standards body members have patented their inventions that later become an essential part of the standard.”

I will disagree with this statement.

The reason is NOT because members may or may not have patented their inventions. You attribute a ’cause’ when such is just NOT a cause.

Any standard set may – or may not – invoke patents, and the presence of patents IS an item that must be disclosed (by any intelligent standards setting body – note that this has NOT always been the case in practice).

What you fully agree to (in regards to my statement) IS the be all and end all of the duty involved.

That duty should be in play no matter what.

Your article is excellent in pointing out that there is dereliction in duty with choices of how to treat patents, but I just do not see the “fit” of AI as anything close to a de facto solution to the dereliction.

The problem is NOT a lack of technology for the task – it is a lack of attention to the task.

Tim Pohlmann

June 18, 2021 10:45 am

Dear Anon, I do not understand your comment. The job of the standard setting body and its members is to set the standard. I fully agree. Some (not all) standards are however subject to patents. The reason for that is that some of the standards body members have patented their inventions that later become an essential part of the standard. This article is about understanding if patent claims read on a standard specification. Understanding this is important as there are only databases of self-declared patents available without any information about these patents being essential or not. In the course of patent licensing negotiations, patent acquisitions, or litigation, the question about which patents are essential and which are not is one of the most debated when negotiating essential patent portfolio value, royalties, or infringement claims. This article targets patent portfolio managers, patent licensing managers, patent transaction managers or economists who are desperately in need of SEP determination to valuate a potential SEP portfolio. This is a real problem in the industry. Why don’t you join the upcoming webinar and listen to the industry experts and their discussion?

Anon

June 18, 2021 08:50 am

This appears to be making things more complicated than is necessary (and seeking a problem for a desired solution to be squeezed into).

The far simpler, more direct, and accountable resolution would be for a standards setting body to do (gasp) their job of SETTING the standards.

“Essentiality” is no different than any other aspect of a standard being set.

Members of these organizations: please speak up and demand accountability.